Featured Project: End-to-End E-Learning Module Development

Lead the Shift is a self-initiated, conceptual leadership simulation created for portfolio purposes. Designed as a scenario-based experience, it allows new operations leaders to practice real-time coaching, prioritization, and escalation decisions in a live-shift context. I led the project end-to-end, including learning strategy, action mapping, scenario design, storyboarding, visual direction, prototyping, and evaluation design, demonstrating my ability to manage the full lifecycle of Learning Experience Design.

Audience: Frontline leaders stepping into their first supervisory roles in fast-paced operational environments

Tools Used: Articulate Storyline 360, ChatGPT, Adobe Photoshop, MindMeister, Google Docs

The Problem Statement

Frontline operations leaders are expected to make fast decisions during live shifts while balancing people, performance, and risk. These moments rarely allow time to pause or reflect, yet the consequences of poor judgment can quickly compound.

- Time pressure: Live shift environments demand immediate decision-making with no opportunity to pause or reflect

- High stakes: Poor judgment can quickly compound, affecting people, performance, and operational stability

- Limited practice opportunities: New leaders have minimal chance to practice real-time coaching, prioritization, and escalation decisions before being accountable for outcomes

- Complex balancing act: Leaders must simultaneously manage people, performance metrics, and risk in dynamic situations

- Trust and stability: Moment-to-moment leadership decisions directly shape team trust and operational stability

This simulated business case focuses on that gap, exploring how moment-to-moment leadership decisions shape trust and operational stability.

The Solution

After analyzing the leadership performance gap, I identified that new supervisors understood core operational expectations, but lacked the confidence and real-time coaching skills needed to lead effectively under pressure. I designed a gamified, scenario-based eLearning experience that would allow emerging leaders to practice high-stakes decisions tied directly to team trust, performance clarity, and early intervention behaviors.

The goal was to create a risk-free environment where learners could test their leadership judgment, see the impact of their choices, and connect their actions to real operational outcomes. This approach helps learners understand not just what leaders should do, but how their decisions shape team trust and shift success.

Process

The development followed a systematic, iterative approach grounded in the ADDIE model (Analysis, Design, Development, Implementation, Evaluation), enhanced with agile methodologies and continuous feedback loops.

Analysis

For this self-initiated portfolio project, I designed a comprehensive analysis framework using made‑up stakeholder profiles, hypothetical interview guides, and sample training data to mirror a real implementation. I defined learning objectives using Bloom's Taxonomy, outlined success metrics aligned with a fictional business context, and created an audience analysis model to represent learner needs, preferences, and constraints. I also documented assumed resource requirements, technical infrastructure considerations, and organizational readiness factors to ground later design decisions.

Design

Using the fictional analysis inputs, I created detailed text-based storyboards mapping each screen, interaction, and assessment. I drafted adaptive branching logic and content variations for different learner paths, all based on imagined but realistic shift scenarios and leadership challenges. I defined visual design guidelines, interaction patterns, accessibility standards, and assessment strategies as if working with real stakeholders, documenting them in a style guide for consistency.

Development

I translated the storyboard into a working prototype in Articulate Storyline, developing multimedia assets, interactions, and decision-based feedback. All content, data points, and performance scenarios were created specifically for this portfolio piece to simulate enterprise complexity without using any real organizational data. I implemented branching, a “Trust Meter” concept, and sample analytics events to demonstrate how the solution could function in a production environment.
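As a rough illustration of how branching, the Trust Meter, and sample analytics events could fit together, here is a minimal sketch in Python. It is not the Storyline implementation: the 0-100 scale, the starting value of 50, and the names `Choice`, `ShiftState`, and `apply_choice` are all hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Choice:
    label: str
    trust_delta: int  # hypothetical change applied to the Trust Meter
    next_scene: str   # hypothetical id of the scene this choice branches to


@dataclass
class ShiftState:
    trust: int = 50        # assumed 0-100 scale, starting at the midpoint
    scene: str = "start"
    events: list = field(default_factory=list)  # sample analytics events


def apply_choice(state: ShiftState, choice: Choice) -> ShiftState:
    """Update the meter, log a sample event, and follow the branch."""
    # Clamp so the meter never leaves the assumed 0-100 range.
    state.trust = max(0, min(100, state.trust + choice.trust_delta))
    state.events.append(
        {"scene": state.scene, "choice": choice.label, "trust": state.trust}
    )
    state.scene = choice.next_scene
    return state
```

Each learner decision would call `apply_choice` once, so the event list doubles as a simple record of the path taken through the scenario.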

Implementation

Because this is a self-initiated project, the implementation plan is conceptual rather than executed. I outlined how a pilot, full rollout, and ongoing support could work in a real organization, including sample communication plans, reference materials, and an approach for SCORM‑compliant LMS deployment. I also drafted a monitoring and analytics concept—using fictional metrics—to show how stakeholder dashboards and learner support could be structured.

Evaluation

I designed an evaluation strategy using Kirkpatrick's Four-Level Model as if this solution were deployed in a live environment. The surveys, focus group prompts, analytics dashboards, and ROI approaches described are based on made‑up data structures and sample questions, created to demonstrate how impact could be measured. All metrics, response patterns, and performance improvements referenced in this portfolio are illustrative only, intended to showcase my evaluation thinking rather than report on real organizational results.

Text-Based Storyboard

Using the action map as a foundation, I developed a text-based storyboard that translates operational leadership challenges into a decision-driven learning experience. Each scene reflects the realities of leading during a live shift, balancing performance goals, team trust, and operational pressure, while surfacing the moments where leadership judgment matters most.

The storyboard is structured around critical, in-the-flow decisions such as prioritizing work, coaching in real time, and escalating emerging risks. Branching dialogue and consequences mirror authentic workplace tradeoffs, allowing learners to see how small leadership choices can either stabilize or compound operational issues.

Learners are positioned as the accountable shift leader, with decisions directly influencing team confidence, performance metrics, and risk exposure throughout the scenario. To support reflection without breaking immersion, an embedded mentor provides optional guidance and prompts at key moments, reinforcing learning while preserving the realism and urgency of the shift.

AI Utilization

AI was used selectively and intentionally to support speed, structure, and quality, never to replace instructional judgment or leadership decision-making. The focus was on using AI as a design assistant to accelerate early work, surface options, and improve consistency, while all learning decisions, scenarios, and feedback logic remained human-led.

Content Development Support

AI tools were used primarily in early design and drafting stages to reduce cycle time and increase clarity.

Scenario Design & Decision Support

AI supported the design process, not learner decision-making—helping generate options, refine wording, and stress-test branches while final choices, tradeoffs, and feedback remained human-authored.

Insights & Iteration Support

After the design phase, AI supported analysis, not conclusions. For example, it reviewed text for clarity, tone, and potential gaps to inform human-led revisions.

Impact of AI Integration

The strategic use of AI improved design efficiency and quality without compromising instructional judgment or realism:

- Reduced early design and revision cycles by accelerating first drafts and option generation

- Improved clarity and consistency across scenarios, feedback, and mentor prompts

- Enabled faster iteration while preserving human control over decisions and outcomes

- Strengthened accessibility and global readiness through early language and readability checks

- Allowed more design time to be spent on scenario realism, decision quality, and learner impact

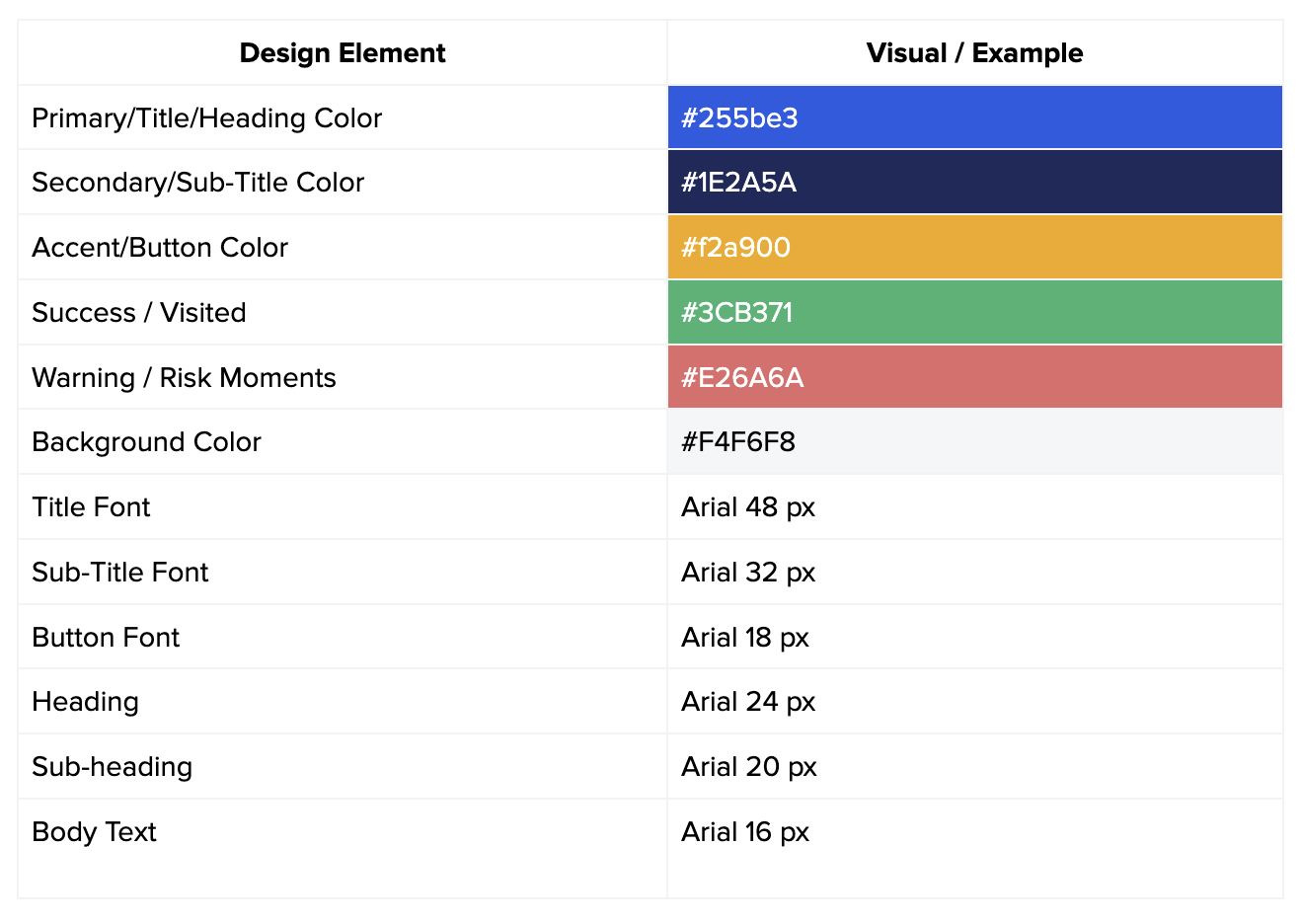

Style Guide

A comprehensive style guide ensured visual consistency, brand alignment, and an optimal learning experience across all module components.

Visual Design

- Color Palette: Primary brand colors with high contrast ratios (WCAG AAA) for accessibility. Accent colors used strategically to highlight key information and calls-to-action

- Typography: Sans-serif font family for body text (16px minimum), serif for headings. Clear hierarchy with consistent sizing and spacing

- Imagery: Professional photography and custom illustrations. Consistent illustration style throughout. All images include descriptive alt text

- Icons: Unified icon set with consistent stroke width and style. Icons used to support text, not replace it
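The WCAG AAA contrast requirement in the color palette can be checked programmatically. The sketch below uses the WCAG 2.x relative luminance and contrast ratio formulas, with the AAA thresholds of 7:1 for normal text and 4.5:1 for large text. The function names are illustrative, and the colors passed in are up to the caller; none of them come from the actual style guide.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple, bg: tuple) -> float:
    """Return the contrast ratio between two colors, from 1.0 up to 21.0."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aaa(fg: tuple, bg: tuple, large_text: bool = False) -> bool:
    # AAA thresholds: 7:1 for normal text, 4.5:1 for large text.
    return contrast_ratio(fg, bg) >= (4.5 if large_text else 7.0)
```

Running `meets_aaa` over every text and background pairing in a palette is a quick way to catch combinations that look fine but fall short of the standard.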

Interaction Design

- Navigation: Clear progress indicators, breadcrumb navigation, and persistent menu access. Consistent button styles and hover states

- Feedback: Immediate visual and textual feedback for all interactions. Success/error states clearly differentiated

- Animations: Subtle, purposeful animations that guide attention without distracting and respect the user's motion preferences

Results & Evaluation

For this self-initiated portfolio project, I designed a mock evaluation framework aligned to Kirkpatrick's Four Levels of Evaluation to show how the experience could be assessed beyond completion and satisfaction, into on-the-job performance. The tools, surveys, and metrics described here are conceptual only and are intended to illustrate my evaluation approach rather than report on real learner data.

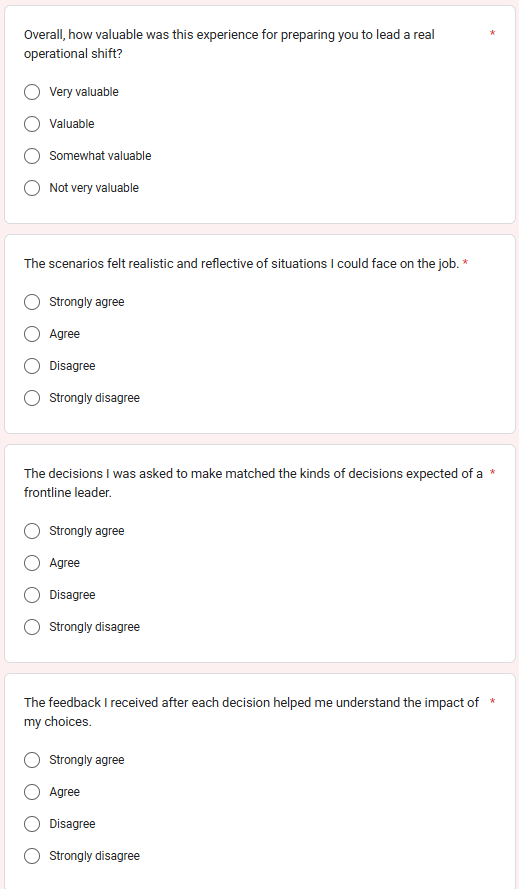

Level 1: Reaction (Survey Link)

What would be measured: Learner perception of relevance, realism, and usefulness of the scenario-based experience.

How it would be measured: Post-experience survey focused on realism, decision quality, feedback clarity, and confidence.

What success would look like:

- High agreement that scenarios reflected real work

- Positive feedback on decision-based design and Trust Meter

- Increased self-reported confidence

Level 2: Learning

Learning effectiveness would be measured using a decision-quality rubric that scores learner choices across three leadership scenarios. Each decision is evaluated based on its impact on people, performance, and risk, allowing learners to experience consequences and recover through subsequent choices.

Score interpretation, based on total earned score:

- 21-30: Demonstrates strong readiness for frontline leadership

- 11-20: Shows emerging capability with targeted development needs

- 0-10: Requires additional coaching and practice
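The score bands above map directly to a small lookup. The sketch below covers only the interpretation step. How points are awarded within each scenario is not specified here, so the function takes the total score as given; the function name is illustrative.

```python
def interpret_learning_score(score: int) -> str:
    """Map a total rubric score (0-30) to its readiness band."""
    if not 0 <= score <= 30:
        raise ValueError("score must be between 0 and 30")
    if score >= 21:
        return "Demonstrates strong readiness for frontline leadership"
    if score >= 11:
        return "Shows emerging capability with targeted development needs"
    return "Requires additional coaching and practice"
```

Rejecting out-of-range totals up front keeps a scoring error from being silently reported as a valid band.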

Level 3: Behavior

Post-training behavior would be assessed over time through shift observations, reflection check-ins, and performance data reviews. Success would show up as more consistent coaching behaviors, clearer communication, and fewer delayed escalations during live shifts among leaders who completed the training.

Key takeaway: The evaluation is designed to detect sustained behavior change, not just one-time performance during the course.

Level 4: Results

At the organizational level, the goal would be to reduce operational incidents by at least 30% over 12 months. Incident data would be tracked monthly and compared between trained leaders and a control group who had not completed the program, using the previous year as a baseline.

Key takeaway: The training was designed with measurable business outcomes in mind, linking leadership decision-making directly to operational performance.
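The Level 4 comparison could be computed along these lines. This is a minimal sketch that assumes incidents are available as 12 monthly counts per group for both the baseline year and the program year. The function names are hypothetical, and any numbers passed in would be illustrative rather than real results.

```python
def percent_reduction(baseline_total: float, current_total: float) -> float:
    """Percentage drop in incidents relative to the baseline year."""
    if baseline_total <= 0:
        raise ValueError("baseline_total must be positive")
    return (baseline_total - current_total) * 100 / baseline_total


def meets_target(baseline_monthly: list, current_monthly: list,
                 target_pct: float = 30.0) -> bool:
    """Check the 12-month reduction goal (at least 30% by default)."""
    if len(baseline_monthly) != 12 or len(current_monthly) != 12:
        raise ValueError("expected 12 monthly counts for each year")
    reduction = percent_reduction(sum(baseline_monthly), sum(current_monthly))
    return reduction >= target_pct
```

Running the same calculation for the trained group and the control group would show whether any reduction is specific to leaders who completed the program.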

Overall Impact

This project demonstrates how scenario-based learning, meaningful feedback, and intentional evaluation design can bridge the gap between training and real-world leadership performance.